Q/KDB+ Blog

Welcome to the Q/KDB+ Blog. Here you’ll find articles exploring various aspects of the Q programming language and KDB+ database.

Latest Articles

| Title | Published | Description |

|---|---|---|

| Sym File Maintenance in KDB+ | Mar 9, 2026 | If your sym file is in need of maintenance, look no further. |

| Recursion vs Iteration in Q/KDB+ | Feb 12, 2026 | Examine the intricate details of recursion and iteration in Q/KDB+. |

| Database Maintenance in KDB+ | Feb 04, 2026 | An in-depth look into how to maintain your database. |

| KDB-X Modules | Nov 25, 2025 | Learn the basics of the KDB-X module system. |

| Performance Costs of KDB+ Attributes | Aug 15, 2025 | Explore the performance costs that KDB+ attributes can have. |

| Performance Benefits of KDB+ Attributes | Aug 4, 2025 | See what performance benefits can be realised when using KDB+ attributes. |

| KDB+ Attributes | May 6, 2025 | Understand the basics of attributes in KDB+. |

| Floating-Point Datatypes in Q/KDB+ | Feb 28, 2025 | Master floating-point datatypes in Q. |

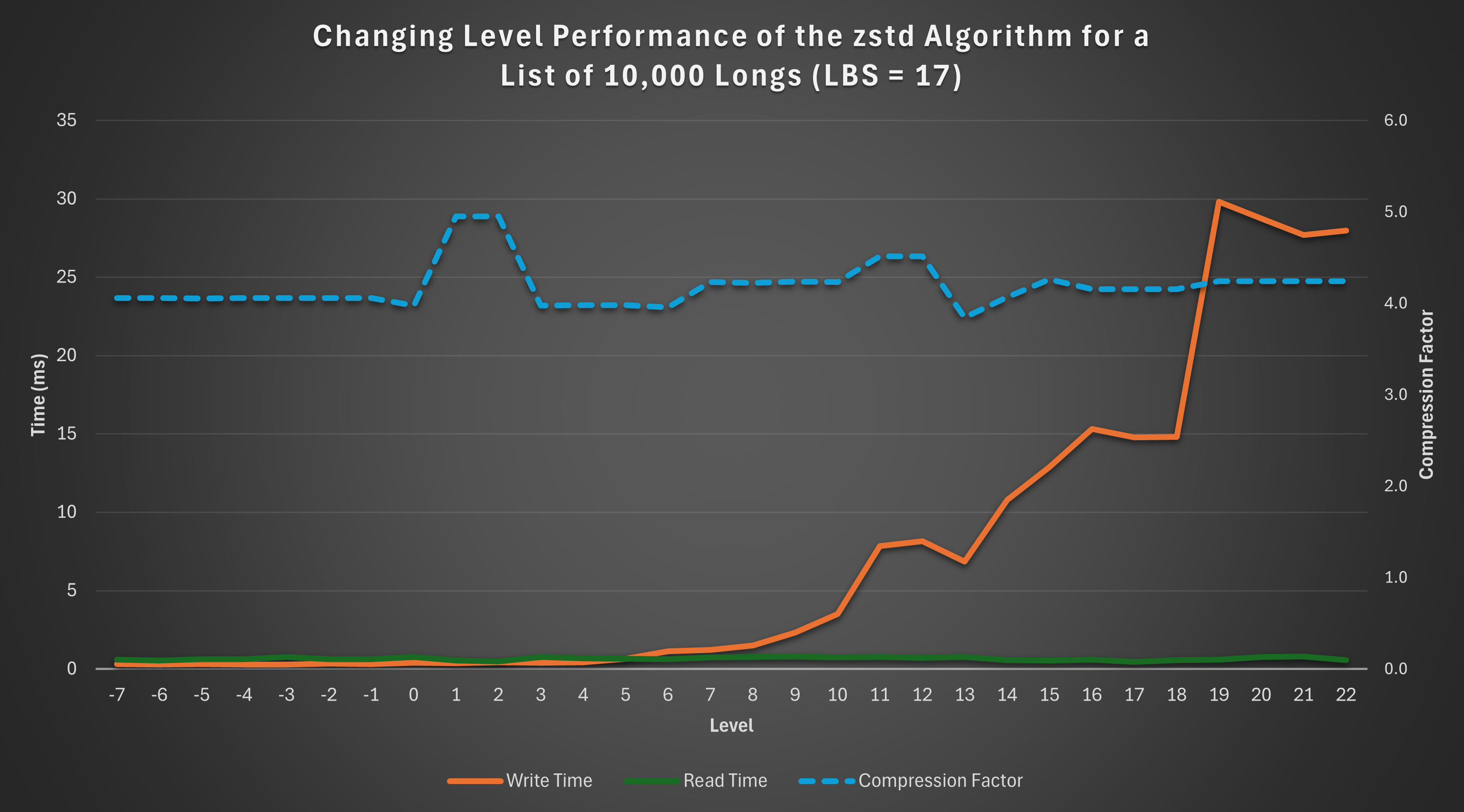

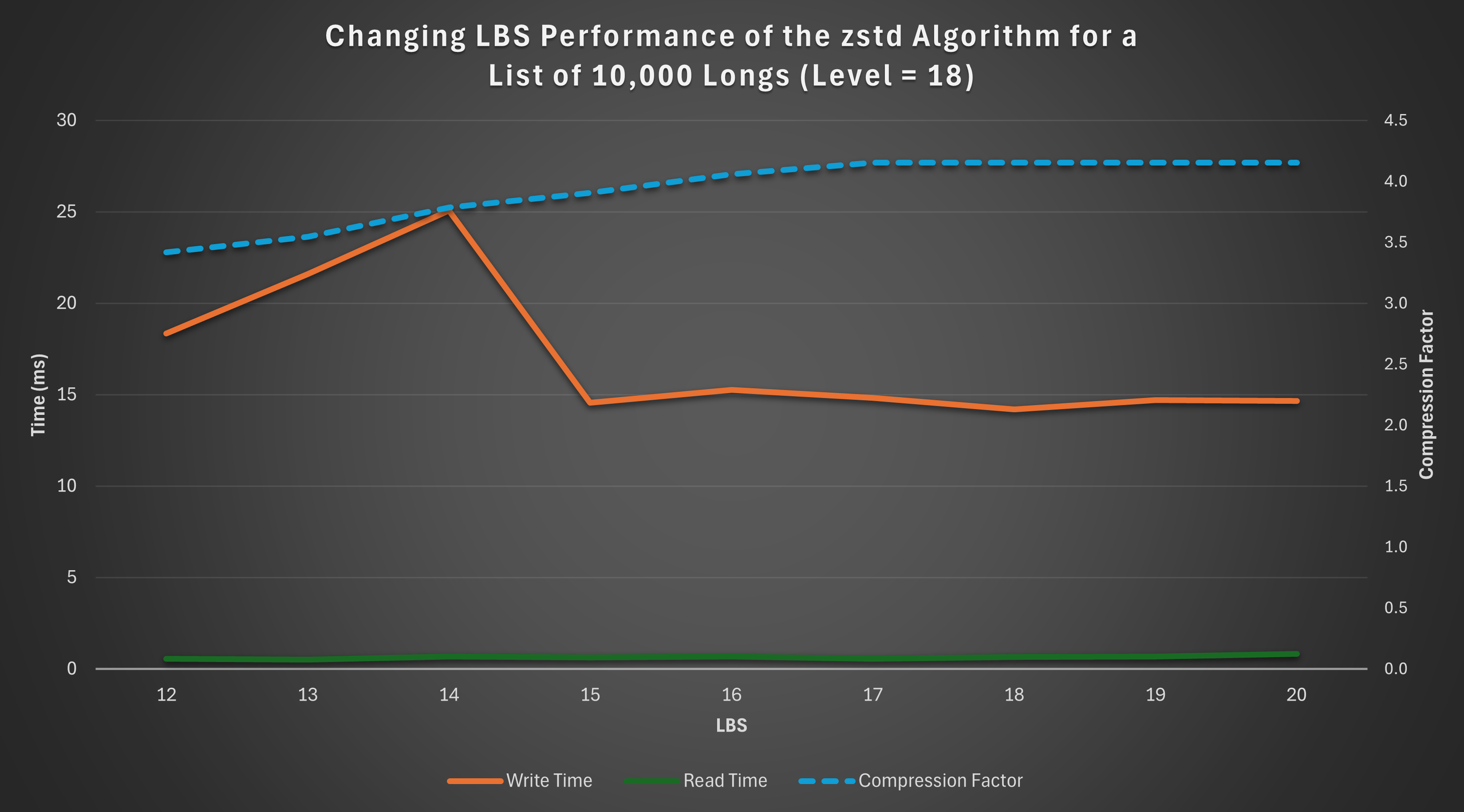

| Measuring Compression Performance in Q/KDB+ | Jan 31, 2025 | Find out how to measure the performance of different compression algorithms and settings. |

| An Introduction to Compression in Q/KDB+ | Oct 13, 2024 | A detailed look at how compression works in Q. |

| Command Line Arguments in Q/KDB+ | Sep 20, 2024 | Discover how to customise Q session behavior using command line arguments. |

| An Introduction to Interacting with REST APIs in Q/KDB+ | Sep 11, 2024 | Learn how to interact with REST APIs using Q, including HTTP GET requests, HTTPS, and handling responses. |

| The Little Q Keywords That Could | Aug 15, 2024 | Explore lesser-known and underutilised Q keywords that can be powerful when used effectively. |

| Analysis of Q Memory Allocation | Aug 15, 2024 | Delve into how Q handles memory allocation using the buddy memory allocation system. |

Feedback & Questions

If you spot an issue, have a suggestion for improvement, or want to ask a question about my blogs, feel free to get in touch. I’m always happy to hear feedback — especially when it helps make things clearer, more accurate, or more useful for others.

You can reach me via email at jkane17x@gmail.com.

Sym File Maintenance in KDB+

The sym file is one of the most critical components of a KDB+ database. It provides significant improvements in both memory efficiency and query performance. However, to preserve these benefits, the sym file must be properly maintained. Excessively large sym files can negatively impact database load times and overall system performance, making it important to keep them as compact as reasonably possible.

This blog examines the considerations involved in database design to minimise sym file growth. It also explores practical techniques for reducing the size of an existing sym file when it becomes excessively large.

This post builds on the concepts introduced in my previous blog on general KDB+ database maintenance. If you are unfamiliar with those fundamentals, I recommend reading that post first, as many of the practices discussed there form the foundation for effective sym file maintenance. Additionally, the code described throughout this blog is available within the dbm.q module.

Warning

Operations on sym files can fail for many reasons. To avoid data loss, always back up your database before performing any of the operations described in this blog.

Symbols and Enumeration

In KDB+, the symbol type is an interned string. This means only one copy of each distinct string value is stored in memory, and all references point to that single instance.

On disk, this concept is implemented through enumeration. Enumerating a list of symbols converts each symbol into an integer index referencing a unique list of symbols known as the domain. This domain is stored in the sym file.

Symbol columns in splayed or partitioned tables must be enumerated. Instead of storing the symbol values directly, these columns store integer indexes into the domain. When the database is mapped into memory, these indexes are resolved back to their corresponding symbol values transparently.
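As a small in-memory illustration of the idea, the sketch below enumerates a symbol list against a domain. The domain name sym and its contents are assumptions chosen for the example, not values from a real database.

```q
// Hypothetical domain: a unique list of symbols
sym:`IBM`AMZN`GOOGL

// Enumerate a symbol list against the domain
e:`sym$`AMZN`IBM`AMZN`GOOGL

type e      // 20h, the enumeration type
`long$e     // underlying indexes into the domain: 1 0 1 2
value e     // resolved back to symbols: `AMZN`IBM`AMZN`GOOGL
```

The enumerated list holds only the indexes; the symbol values themselves live once in the domain.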

Enumeration provides several important benefits:

- Reduced memory and disk usage — only one copy of each unique symbol is stored, while references use fixed-width integer indexes.

- Improved query performance — integer comparisons are significantly faster than string comparisons.

- Efficient memory mapping — fixed-width representations improve cache efficiency and access speed.

However, enumeration also introduces operational complexity:

- Tables must be properly enumerated before being written to disk.

- The sym file becomes a critical dependency; corruption or inconsistency can lead to severe data integrity issues.

- The sym file grows over time as new unique symbols are added. This growth, often referred to as sym file bloat, can significantly increase database load times.

Why Sym File Maintenance Matters

The sym file (or files) is loaded into memory when a database is opened. Its size therefore directly impacts startup time and memory usage. In systems with high symbol cardinality, such as trade identifiers or user-generated strings, the sym file can grow rapidly.

Uncontrolled growth can lead to:

- Slow database startup times

- Increased memory consumption

- Reduced overall system efficiency

- Longer recovery times during failover or restart

Proper design and ongoing maintenance are essential to prevent these issues.
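Before deciding whether maintenance is needed, it can help to measure the sym file. The following is a minimal sketch that writes a small symbol list to a scratch file and inspects it; the file name symdemo is hypothetical, and a real sym file would sit in the database root.

```q
// Scratch file name is hypothetical; a real sym file lives in the database root
f:`:symdemo
f set `IBM`AMZN`GOOGL`META      // write a small symbol list to disk
count get f                     // number of symbols in the domain: 4
hcount f                        // size of the file on disk in bytes
hdel f                          // remove the scratch file
```

Tracking these two numbers over time gives a simple view of how quickly the domain is growing.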

Design Considerations

When designing a database, the choice of datatype for columns is an important consideration. A common decision is whether to use the symbol or string type for values containing alphanumeric characters. In many cases, it may not be immediately obvious which type is most appropriate.

In practice, the following guidelines can help inform that choice:

| Symbol | String |

|---|---|

| Values contain only alphanumeric, underscore, or dot characters (0-9A-Za-z_.) | Values contain spaces or special characters (which can make certain operations more cumbersome) |

| High repetition of values | Low repetition of values |

| Column frequently used in comparison operations | Column rarely used in comparison operations |

Note

Forward slashes can be used in file symbols without any cumbersome handling. However, in such cases the use of a symbol is usually deliberate, as the value is intended to represent a file path.

You may also encounter identifier columns that are highly unique but frequently used in comparison operations. In such cases, consider using the GUID type, which provides efficient comparisons without introducing large numbers of entries into a symbol domain.

Creating a Decision Function

We can create a helper function that analyses a list of values (provided as strings) and suggests whether they should be stored as strings or symbols.

First, given a string x, we check whether it contains any characters that are unsuitable for symbols:

hasBadSymChar:{0<count x except .Q.nA,.Q.a,"_."}

Example:

q)hasBadSymChar each ("abc";"def";"g.h";"i_j";"k@l")

00001b

If any value in the list contains such characters, the column should remain a string:

any hasBadSymChar each vals

Measuring Uniqueness

Next, we compute the uniqueness ratio (also called relative cardinality):

\[ \text{Uniqueness Ratio} = \frac{\text{Number of Unique Elements}}{\text{Total Number of Elements}} \]

In q:

(count distinct vals)%count vals

A ratio of 1 indicates that all values are unique, while a ratio approaching 0 indicates a high level of repetition.
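For instance, with a small hypothetical list of values (chosen purely for illustration), the calculation looks like this:

```q
vals:("abc";"def";"abc";"abc")     // hypothetical column values
uniqueCount:count distinct vals    // number of distinct values: 2
uniqueCount%count vals             // uniqueness ratio: 0.5
```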

The interpretation of this ratio depends on the dataset, but one possible guideline might be:

| Ratio | Cardinality |

|---|---|

| > 0.9 | Very high |

| 0.5 - 0.9 | High |

| 0.1 - 0.5 | Medium |

| 0.01 - 0.1 | Low |

| < 0.01 | Very low |

However, the ratio alone does not tell the full story. Consider the following examples:

| Total Values | Distinct Values | Uniqueness Ratio |

|---|---|---|

| 10 | 5 | 0.5 |

| 1,000,000 | 500,000 | 0.5 |

Both datasets have the same ratio, yet the second introduces 500,000 unique symbols into the domain. Depending on the application, this may still be considered very high cardinality.

For this reason, we should also consider absolute cardinality (the number of distinct values).

Combining Relative and Absolute Cardinality

We can define both relative and absolute thresholds. If both thresholds are exceeded, the values should remain strings; otherwise, they can be stored as symbols.

(relThreshold<uniqueCount%count vals) and

absThreshold<uniqueCount:count distinct vals

The final helper function returns the values either as strings or symbols depending on these checks:

decideType:{[absThreshold;relThreshold;vals]

$[

any hasBadSymChar each vals; vals;

(relThreshold<uniqueCount%count vals) and

absThreshold<uniqueCount:count distinct vals; vals;

`$vals

]

};

Example:

decideType[10000;0.5;] ("abc";"def";"ghi";..)

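Here, the absolute cardinality threshold is set to 10,000 and the relative cardinality threshold to 0.5. As written, decideType keeps the values as strings only when both thresholds are exceeded. This means that values will be stored as symbols if no value contains an unsuitable character and at least one of the following holds:

- the uniqueness ratio is at most 0.5, or

- the number of distinct values is at most 10,000.

Otherwise, the values remain strings.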

When choosing the absolute threshold, consider how many additional symbols your current sym file can reasonably accommodate. The relative threshold can help anticipate future growth patterns in the data.

This method provides a simple heuristic for datatype selection. In practice, the final decision may also depend on query patterns, expected data growth, and domain-specific considerations. Nevertheless, applying these checks provides a useful baseline when designing a database schema.
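To see the heuristic in action, here is a small sketch that repeats the two definitions so it can be run on its own. The input lists and the deliberately tiny thresholds are assumptions made for illustration only.

```q
// Definitions repeated from above so the snippet is self-contained
hasBadSymChar:{0<count x except .Q.nA,.Q.a,"_."}
decideType:{[absThreshold;relThreshold;vals]
  $[any hasBadSymChar each vals; vals;
    (relThreshold<uniqueCount%count vals) and absThreshold<uniqueCount:count distinct vals; vals;
    `$vals]
  }

// Hypothetical inputs
repeated:("abc";"def";"abc";"abc")    // 2 distinct out of 4, ratio 0.5
unique:("a1";"b2";"c3";"d4")          // 4 distinct out of 4, ratio 1

decideType[1;0.4;repeated]            // ratio 0.5>0.4 and 2>1: stays as strings
decideType[3;0.5;unique]              // ratio 1>0.5 and 4>3: stays as strings
decideType[10;0.5;unique]             // only 4 distinct values (not above 10): `a1`b2`c3`d4
decideType[10;0.5;("a b";"cd")]       // contains a space: stays as strings
```

In practice the thresholds would be far larger; small values are used here only so that each branch can be exercised with a handful of values.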

Converting Between String and Symbol Types

In the previous section we discussed how to decide whether a column should use the string or symbol type. However, in many cases the schema of an existing database has already been established and such decisions have already been made. Over time, requirements may change and you may decide that a column would be better represented using the other type.

In this section we look at how to convert a column from symbol to string and from string to symbol.

From Symbol to String

A column can usually be cast from one type to another using castCol provided by dbm.q. However, converting a column from symbol to string requires a slightly different approach.

Instead of casting directly, we use fnCol to apply the string function to the column values.

When operating directly on the column file on disk, a symbol column does not contain the symbol values themselves. Instead, it contains the enumeration indexes into the symbol domain. Therefore, before converting to strings we must first map those indexes back to their corresponding symbol values.

The lambda passed to fnCol therefore looks like this:

{[db;vals] $[20h=type vals; string (get db,key vals) vals; vals]}[db;]

Here vals is the list of column values.

If vals is an enumeration type (since KDB+ 3.6, enumerations always have type 20h):

- The domain file is loaded using get db,key vals

- The enumeration indexes are mapped to their symbol values

- The resulting symbols are converted to strings using string

If the values are not enumerated symbols, they are returned unchanged.
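The same resolution can be sketched in memory without touching any files. The domain and column values below are hypothetical, and the explicit cast to long is there only to make the index step visible.

```q
// Hypothetical in-memory domain and enumerated column values
sym:`IBM`AMZN`GOOGL
vals:`sym$`AMZN`IBM`AMZN

idx:`long$vals        // underlying indexes: 1 0 1
string sym idx        // ("AMZN";"IBM";"AMZN")
```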

Avoiding Repeated Domain Loads

fnCol may apply the operation to many column files in a partitioned database. If we load the domain file inside the lambda, it would be reloaded for every partition, which can be inefficient if the domain file is large.

To avoid this, we determine the domain once and reuse it.

However, identifying the domain file is not completely straightforward because a table may exist in many partitions, each containing a copy of the column file. To determine the domain name we inspect one of these column files.

A common approach is to use the most recent partition, which can generally be assumed to be in a consistent state.

We first create a helper function to obtain the table directory from the most recent partition.

// Get the table directory from the most recent partition

mostRecentTdir:{[db;tname] last asc allTablePaths[db;tname]}

allTablePaths (provided in dbm.q) returns every path to the table across all partitions.

Sorting these paths and taking the last value gives the most recent partition.

This also works for splayed tables, since allTablePaths will return a single path.

Determining the Domain Name

Once we have the table path we can inspect the column file to determine the domain used by the enumeration.

// Get the name of the domain used by the given column

colDomainName:{[db;tname;cname]

$[20h=type vals:get mostRecentTdir[db;tname],cname; key vals; `]

}

If the column is an enumeration type, the domain name is obtained using key. Otherwise, the function returns a null symbol.

Symbol to String Conversion

We can now implement the function that converts a symbol column to a string column.

// Convert a column of symbol type to string type

symToStrCol:{[db;tname;cname]

if[not null domainName:colDomainName[db;tname;cname];

fnCol[db;tname;cname;string get[db,domainName]@]

];

}

Since the column type is already verified in colDomainName, the lambda passed to fnCol does not need to check the type again.

Note

After this conversion, many values in the sym file may no longer be referenced by any column. These redundant symbols remain in the domain and can contribute to unnecessary sym file growth. We will discuss how to remove these unused values in a later section.

From String to Symbol

Converting from string to symbol is more involved.

The process requires:

- Casting the column values to symbols

- Adding any new symbols to the domain

- Converting the symbols to their corresponding domain indexes

- Writing the enumerated values back to disk

Because we must enumerate the symbols against a specific domain, the function requires an additional argument dname, which is the name of the domain.

Loading the Domain

We begin by loading the domain file into memory.

dfile:.Q.dd[db;dname]

domain:get dfile

Iterating Over Column Files

In a partitioned database, the column will exist in many partition directories. Each column file may contain different symbols, so the domain may need to be updated as we process each partition.

For this reason the operation cannot safely be parallelised, since concurrent updates to the domain could lead to inconsistent enumeration values.

Instead, we iterate over each column path and update the domain incrementally.

Loading and Casting Column Values

For each column path we first verify that the column exists.

has1Col[tdir;cname]

If the column file exists we load the values and cast them to symbols.

vals:`$get cfile:.Q.dd[tdir;cname]

This cast works for string columns, but there is an important edge case when the column is of type char.

If a char list such as

"abc"

is cast directly using `$, the result will be a single symbol

`abc

instead of three individual symbols.

To ensure that each element is converted separately we use an explicit each-right:

vals:`$/:get cfile:.Q.dd[tdir;cname]

This works correctly for both char and string inputs.
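The difference is easy to check directly in a session with a few literal inputs:

```q
`$"abc"               // one symbol: `abc
`$/:"abc"             // one symbol per character: `a`b`c
`$/:("abc";"def")     // one symbol per string: `abc`def
```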

Updating the Domain

Any new symbols encountered in the column must be added to the domain.

domain:domain union vals

Converting Symbols to Domain Indexes

We convert the symbol values into indexes of the domain using the find operator ?.

domain?vals

Since the column may contain many repeated values, we optimise this operation using .Q.fu, which applies a function only to the unique values and then expands the result back to the original shape.

.Q.fu[domain?;vals]

Example:

// Without optimisation

q)3*1 2 1 2 1 1

3 6 3 6 3 3

// Only two multiplications are performed (3*1 and 3*2), and the results are reused

q).Q.fu[3*;1 2 1 2 1 1]

3 6 3 6 3 3
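Applied to symbols, the domain update and index lookup can be sketched in memory as follows. The domain contents and column values are hypothetical.

```q
domain:`IBM`AMZN                   // hypothetical existing domain
vals:`AMZN`GOOGL`AMZN`IBM          // hypothetical column values

domain:domain union vals           // new symbols are appended: `IBM`AMZN`GOOGL
idx:.Q.fu[domain?;vals]            // indexes into the domain: 1 2 1 0
domain idx                         // round trip: `AMZN`GOOGL`AMZN`IBM
```

Because union only appends, the positions of existing symbols are preserved, so indexes already written to other column files remain valid.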

Writing the Enumerated Values

The resulting indexes are assigned the domain using !:

dname!.Q.fu[domain?;vals]

The updated column values are then written back to disk.

.[cfile;();:;dname!.Q.fu[domain?;vals]]

Helper Function

This logic can be encapsulated in a helper function that converts a single column file.

// Convert a column from string type to symbol type

strToSym1Col:{[domain;tdir;cname;dname]

if[has1Col[tdir;cname];

domain:domain union vals:`$/:get cfile:.Q.dd[tdir;cname];

.[cfile;();:;dname!.Q.fu[domain?;vals]]

];

domain

}

The function returns the (possibly updated) domain so it can be reused when processing additional column files.

Complete Conversion Function

We can now construct the full function that processes every column path in the table.

// Convert a column from string type to symbol type

strToSymCol:{[db;tname;cname;dname]

dfile:.Q.dd[db;dname];

domain:strToSym1Col[;;cname;dname]/[get dfile;allTablePaths[db;tname]];

dfile set domain;

}

After all column files have been processed, the final domain is written back to disk.

Cleaning a Sym File

Databases evolve over time. Tables and columns are added, schemas change, and older structures are eventually removed. When symbol columns are dropped, the symbols that were referenced exclusively by those columns may no longer be used anywhere in the database. However, their entries remain in the sym file.

This results in a sym file containing many unused values. While functionally harmless, these unused entries waste space and can significantly increase database load times.

The solution is to clean (or compact) the sym file by identifying and removing unused symbols. The first step is to determine which symbols are still referenced.

Identifying Used & Unused Symbols

To identify unused symbols within a database, we must first determine which symbols are actually in use. We can then filter the domain to exclude those unused entries.

Used Symbols in a Single Splayed Table

Assume we have the path to a splayed table stored in the variable tdir. First, we retrieve the list of column names:

getColNames tdir

getColNames is a helper function defined in dbm.q that takes a splayed table directory path and returns the list of column names. For example:

q)getColNames `:splayDB/trade

`time`sym`ex`size`price

We are only interested in columns of enumeration type (20h).

To check a column’s type, we must load it:

get tdir,col

We can then test whether the column is an enumeration:

20h=type get tdir,col

Example:

q)20h=type get `:splayDB/trade,`price

0b

q)20h=type get `:splayDB/trade,`ex

1b

If a column is enumerated, we can determine its domain name using key:

// ex column is enumerated against the domain called sym

q)key get `:splayDB/trade,`ex

`sym

Multiple Domains Caveat

Although uncommon, it is possible for different columns in the same table to be enumerated against different domains.

For example, in the database splayDBMulti, the trade table has two enumeration columns — sym and ex — each using a different domain:

q)tdir:`:splayDBMulti/trade

q)key get tdir,`sym

`sym1

q)key get tdir,`ex

`sym2

The corresponding domain files are stored at the database root:

q)key `:splayDBMulti

`s#`sym1`sym2`trade

To account for this possibility, we return a dictionary mapping domain name to used indexes, rather than a flat list of symbols.

Using splayDBMulti as an example:

q)tdir:`:splayDBMulti/trade

q)map:([])

Extracting usage from the sym column:

q)show enum:get tdir,`sym

`sym1!0 1 2 3 4

q)map[key enum]:value enum

q)map

sym1| 0 1 2 3 4

Now for the ex column:

q)show enum:get tdir,`ex

`sym2!0 1 0 2 1

q)map[key enum]:value enum

q)map

sym1| 0 1 2 3 4

sym2| 0 1 0 2 1

Avoiding Overwrites

If another column is also enumerated against sym2, naïvely assigning would overwrite existing entries:

q)enum:`sym2!2 3 0 2 1

q)map[key enum]:value enum

q)map

sym1| 0 1 2 3 4

sym2| 2 3 0 2 1

Instead, we must append:

q)map[key enum],:value enum

q)map

sym1| 0 1 2 3 4

sym2| 0 1 0 2 1 2 3 0 2 1

Since we only care about distinct indexes, we refine this using distinct:

q)map[key enum]:distinct map[key enum],value enum

q)map

sym1| 0 1 2 3 4

sym2| 0 1 2 3

Even better, we can use union, which is equivalent to distinct + append (,):

q)map[key enum]:map[key enum] union value enum

q)map

sym1| 0 1 2 3 4

sym2| 0 1 2 3

Completing the Function

We now iterate over all columns using over (/), building the domain map incrementally:

// Build a mapping of domain name to indexes of used symbols in the given splayed table

domainUsed1:{[tdir]

{[tdir;map;col]

if[20h=type enum:get tdir,col;

map[key enum]:map[key enum] union value enum

];

map

}[tdir]/[([]);getColNames tdir]

}

Examples:

q)domainUsed1 `:splayDB/trade

sym| 0 1 2 3 4 5 6 7

q)domainUsed1 `:splayDBMulti/trade

sym1| 0 1 2 3 4

sym2| 0 1 2

Applying to Multiple Partitions

As described in a previous blog, we can wrap this to operate across partitions:

// Build a mapping of domain name to indexes of used symbols in the given database table

domainUsed:{[db;tname] (union'/) domainUsed1 peach allTablePaths[db;tname]}

domainUsed1 is applied in parallel (peach) across all table directories. The result is a list of dictionaries, which we combine using union' and /.

Example of union':

// union is applied on a key by key basis using '

q)(`sym1`sym2!(0 1 2;3 4 5)) union' `sym1`sym3!(2 6 1;7 8 9)

sym1| 0 1 2 6

sym2| 3 4 5

sym3| 7 8 9

Unused Symbols

Once we know which indexes are used, finding unused indexes is straightforward.

We generate all possible indexes using til:

til count get db,domainName

Then remove the used indexes:

(til count get db,domainName) except domainUsed[db;tname] domainName
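As an in-memory illustration of the same calculation, the sketch below uses a hypothetical domain and a hypothetical list of used indexes, and also reports what fraction of the domain is unused.

```q
domain:`IBM`AMZN`GOOGL`META`SPOT        // hypothetical domain
used:0 2 2 4                            // hypothetical indexes found in column files

unused:(til count domain) except used   // 1 3
domain unused                           // symbols no longer referenced: `AMZN`META
(count unused)%count domain             // fraction of the domain that is unused: 0.4
```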

To apply this to all domains:

// Build a mapping of domain name to indexes of un-used symbols in the given database table

domainUnused:{[db;tname] except'[;used] (til count get db,) each key used:domainUsed[db;tname]}

Note

domainUnused only includes domains that have at least one used symbol. If a domain does not appear in the result, it is completely unused by the table.

Identifying unused symbols is not strictly required to rebuild domains, but it is useful for assessing whether compaction is necessary.

Complete Domain Usage

To compute usage across the entire database, we apply domainUsed to every table in our database (using listTabs provided by dbm.q) and combine using (union'/):

// Build a mapping of domain name to indexes of used symbols in the given database

domainUsage:{[db] (union'/) domainUsed[db;] peach listTabs db}

Persisting the New Domain(s)

After identifying the used indexes, we rebuild the domains in two stages.

1) Resolving Domain Indexes

We convert index mappings into actual symbol values:

// Convert a domain mapping from index values to symbol values

resolveDomainMap:{[db;dm] ((get db,) each key dm)@'dm}

Example:

q)resolveDomainMap[db;] domainUsed[db;tname]

sym| IBM AMZN GOOGL META SPOT L O SI

2) Persisting to Disk

Domains can be written using set:

q).Q.dd[`:temp;`sym] set `IBM`AMZN`GOOGL`META`SPOT`L`O`SI

We generalise this:

// Save each domain as a file containing the symbol values

persistDomainMap:{[dir;dm] (.Q.dd[dir;] each key dm) set' dm}

Important

The dir parameter should not initially be the database root. Always write rebuilt sym files to a temporary location first. Only after successful re-enumeration should they replace the originals.

Rebuilding Domains

We combine all steps into a single convenient function:

// Recreate all domains of the given database with only the used symbols

rebuildDomains:{[db;dir] persistDomainMap[dir;] resolveDomainMap[db;] domainUsage db}

Calling rebuildDomains writes cleaned domain files to dir but does not modify the database. This makes it a safe first step when performing a sym file clean-up.

Re-enumeration

Re-enumerating a database against a new domain requires performing the following steps for each symbol column:

- Load the column values.

- Resolve the existing indexes against the current domain.

- Convert the resulting symbols into indexes of the new domain.

- Write the updated column values back to disk.

Because the new domain has already been created, the re-enumeration step only modifies individual column files. This means the operation can be performed in parallel, since no shared state is modified.
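The steps above can be sketched in memory before involving any files. All names and values here are hypothetical; in particular, sorting the used indexes is just one way to build a compacted domain for the example.

```q
currDomain:`IBM`AMZN`GOOGL`META`SPOT        // hypothetical current domain
colIdx:4 0 4 2 0                            // hypothetical column indexes into currDomain

newDomain:currDomain asc distinct colIdx    // compacted domain: `IBM`GOOGL`SPOT
newIdx:.Q.fu[newDomain?;currDomain colIdx]  // indexes into the new domain: 2 0 2 1 0

(newDomain newIdx)~currDomain colIdx        // 1b, the resolved symbols are unchanged
```

The final check is the property that matters: the column resolves to the same symbols before and after re-enumeration.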

Warning

The steps in this section perform in-place updates to the database. If interrupted or executed incorrectly, data corruption or loss may occur. This process must be performed during a maintenance window with the database offline. Until re-enumeration and domain replacement are fully complete, the database should be considered inconsistent and must not be accessed.

Re-enumerating a Single Column

A column only needs to be re-enumerated if it is an enumeration type and therefore has an associated domain. We can obtain the current domain name using colDomainName, which we defined earlier:

currDomainName:colDomainName[db;tname;cname]

If currDomainName is null, the column is not an enumeration type and we can exit early. Otherwise, we load the current domain:

currDomain:get db,currDomainName

We must also provide the path to the new domain file (newDomainFile), which may not yet reside within the database directory. We load this file and extract its basename to determine the new domain name:

newDomain:get newDomainFile

newDomainName:last ` vs newDomainFile
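For reference, this is how vs splits a file handle; the path used here is hypothetical.

```q
` vs `:temp/sym          // directory and file name: `:temp`sym
last ` vs `:temp/sym     // file name only: `sym
```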

At this point we have everything required to re-enumerate the column against the new domain.

{[currDomain;newDomain;newDomainName;vals]

newDomainName!.Q.fu[newDomain?;currDomain vals]

}[currDomain;newDomain;newDomainName;]

This lambda (projection) re-enumerates the column values (vals) in the same way we performed enumeration in the previous section. The steps are:

- Resolve the existing indexes using currDomain

- Locate the new indexes in newDomain

- Apply .Q.fu to avoid repeated lookups

- Assign the new domain name using !

We can apply this operation to every column file using fnCol:

fnCol[db;tname;cname;] {[currDomain;newDomain;newDomainName;vals]

newDomainName!.Q.fu[newDomain?;currDomain vals]

}[currDomain;newDomain;newDomainName;]

Putting everything together gives the complete function:

// Re-enumerate a column against a new domain across all partitions

reenumerateCol:{[db;tname;cname;newDomainFile]

if[not null currDomainName:colDomainName[db;tname;cname];

currDomain:get db,currDomainName;

newDomain:get newDomainFile;

newDomainName:last ` vs newDomainFile;

fnCol[db;tname;cname;] {[currDomain;newDomain;newDomainName;vals]

newDomainName!.Q.fu[newDomain?;currDomain vals]

}[currDomain;newDomain;newDomainName;]

];

}

This function allows us to re-enumerate a single column. While uncommon, it can be useful when columns within the same table are enumerated against different domains or when re-enumeration needs to be performed incrementally.
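One detail worth keeping in mind is that find (?) returns the count of the list for values it cannot locate, so a symbol missing from the new domain would produce an out-of-range index rather than an error. The sketch below shows an optional guard that could be run before writing; it is not part of dbm.q, and the values are hypothetical.

```q
newDomain:`IBM`GOOGL`SPOT                     // hypothetical new domain
resolved:`SPOT`IBM`META                       // META is missing from newDomain

idx:newDomain?resolved                        // 2 0 3, where 3 is count newDomain
missing:resolved where idx=count newDomain    // symbols absent from the new domain
count missing                                 // 1, so re-enumeration should not proceed
```

If the new domain was rebuilt from the database's own usage, missing should be empty; a non-empty result suggests the domain file is stale or was built from a different database.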

Re-enumerating a Table

In most databases, the same domain is used for all symbol columns within a table.

We can therefore define reenumerateTab to re-enumerate all enumeration-type columns (returned by listEnumCols from dbm.q) across all partitions of a table:

// Re-enumerate all enumeration columns in a table

reenumerateTab:{[db;tname;newDomainFile]

reenumerateCol[db;tname;;newDomainFile] peach listEnumCols[db;tname];

}

For databases that use a single global domain (the most common case), we can extend this to all tables:

// Re-enumerate every table in the database

reenumerateAll:{[db;newDomainFile]

reenumerateTab[db;;newDomainFile] peach listTabs db;

};

Replacing the Old Sym Files

After re-enumeration, the updated column files reference the new domain file(s). However, these files are not yet located in the database root directory.

The final step is therefore to:

- Remove or archive the existing sym file(s).

- Move the rebuilt sym file(s) into the database root.

This can be done at the system level. For example, on Linux:

mv /path/to/database/oldSymFile /path/to/archive/oldSymFile

mv /path/to/newSymFile /path/to/database/newSymFile

Once the new sym file is in place, the database can be restarted and will load the rebuilt domain.

Renaming a Domain

Renaming a domain is not a common operation, but it can be useful in situations such as changes to naming conventions or schema refactoring.

Renaming a domain requires two main steps:

- Create a copy of the existing domain file using the new name.

- Re-enumerate any columns currently enumerated against the old domain so that they reference the new domain.

The first step is simply a filesystem copy. The dbm.q library already provides an internal helper function copy for this purpose.

copy[`:db/currSym;`:db/newSym]

The second step is slightly more involved.

Re-enumerating the entire database cannot be done using reenumerateAll, because a database may contain multiple domains. Calling reenumerateAll would re-enumerate every enumeration column against the new domain, regardless of which domain it currently uses.

Instead, we need stricter versions of the re-enumeration functions that only operate on columns enumerated against a specific domain.

We will therefore introduce reenumerateColFrom, reenumerateTabFrom, and reenumerateAllFrom. These functions behave similarly to the previously defined re-enumeration functions, but they only operate on columns whose current domain matches the specified domain.

reenumerateColFrom

Compared with reenumerateCol, this function has two differences:

- It takes an additional parameter currDomainName.

- It checks that the column is currently enumerated against this domain before performing the re-enumeration.

// Re-enumerate a column from a specific domain to a new domain

reenumerateColFrom:{[db;tname;cname;currDomainName;newDomainFile]

if[currDomainName=colDomainName[db;tname;cname];

// same as reenumerateCol ..

];

}

Since reenumerateCol and reenumerateColFrom share most of their logic, we can refactor the common functionality into a helper function.

Shared Re-enumeration Helper

reenumerateCol0:{[db;tname;cname;currDomainName;newDomainFile]

currDomain:get db,currDomainName;

newDomain:get newDomainFile;

newDomainName:last ` vs newDomainFile;

fnCol[db;tname;cname;] {[currDomain;newDomain;newDomainName;vals]

newDomainName!.Q.fu[newDomain?;currDomain vals]

}[currDomain;newDomain;newDomainName;];

}

This helper performs the actual re-enumeration and is used by both variants of the function.

The original reenumerateCol can now be simplified:

reenumerateCol:{[db;tname;cname;newDomainFile]

if[not null currDomainName:colDomainName[db;tname;cname];

reenumerateCol0[db;tname;cname;currDomainName;newDomainFile]

];

}

And the stricter version becomes:

reenumerateColFrom:{[db;tname;cname;currDomainName;newDomainFile]

if[currDomainName=colDomainName[db;tname;cname];

reenumerateCol0[db;tname;cname;currDomainName;newDomainFile]

];

}

Re-enumerating Tables and Databases

Because reenumerateTab and reenumerateAll are implemented in terms of reenumerateCol, we can define reenumerateTabFrom and reenumerateAllFrom in the same way using reenumerateColFrom.

// Re-enumerate all enumeration columns in a table from a given domain

reenumerateTabFrom:{[db;tname;currDomainName;newDomainFile]

reenumerateColFrom[db;tname;;currDomainName;newDomainFile] peach listEnumCols[db;tname];

};

// Re-enumerate every table in the database from a given domain

reenumerateAllFrom:{[db;currDomainName;newDomainFile]

reenumerateTabFrom[db;;currDomainName;newDomainFile] peach listTabs db;

};

We can now re-enumerate only the appropriate columns across the entire database:

reenumerateAllFrom[db;currName;newFile]

Final Renaming Function

Combining these steps, we arrive at the final renaming function:

// Rename a (symbol) domain

renameDomain:{[db;currName;newName]

currFile:.Q.dd[db;currName];

newFile:.Q.dd[db;newName];

copy[currFile;newFile];

reenumerateAllFrom[db;currName;newFile];

}

This function deliberately does not delete the original domain file. Keeping the old file temporarily allows for recovery if anything goes wrong during the process.

Once the database has been validated, the old domain file can be safely archived or removed.

Conclusion

The sym file is a fundamental component of a KDB+ database. By enabling efficient symbol enumeration, it provides significant improvements in both storage efficiency and query performance. However, these benefits come with operational considerations. Without proper management, sym files can grow unnecessarily large, increasing startup times and memory usage while potentially introducing avoidable complexity into the system.

In this post we explored several techniques for managing sym files more effectively. These included identifying when symbol enumeration is appropriate, converting columns away from enumeration when it no longer provides benefit, renaming domains safely, and cleaning unused values from a sym file. Together, these techniques provide a practical toolkit for maintaining healthy and efficient symbol domains over the lifetime of a database.

As with many aspects of KDB+ database maintenance, prevention is often easier than correction. Thoughtful schema design, careful consideration of symbol cardinality, and periodic maintenance can prevent excessive sym file growth before it becomes a problem. When issues do arise, the techniques described here can help restore order while minimising risk to the underlying data.

Ultimately, a well-maintained sym file contributes directly to faster startup times, lower memory usage, and more predictable system behaviour — all of which are essential for reliable production KDB+ systems.

Recursion vs Iteration in Q/KDB+

Mathematically, recursion and iteration both describe the repeated application of a rule to successive results. In programming, they often produce identical outputs and can frequently be used to express the same algorithms.

However, they are not equivalent in how they are evaluated. The difference lies in how each approach is executed internally — particularly in how state is managed and how the call stack (or lack of it) is used. These implementation details can have significant implications for performance, memory usage, and even correctness in non-trivial cases.

In Q/KDB+, where iteration is a first-class concept and functional constructs are deeply embedded in the language, the distinction becomes especially important. This blog explores the similarities between recursion and iteration, and more importantly, the practical differences in how recursive and iterative functions are defined and executed in Q.

What is a Recursive Function

A recursive function is one that calls itself within its own body.

A recursive algorithm works by reducing a problem to a smaller (or simpler) version of itself. This process continues until a point is reached where the answer is known directly. This stopping condition is referred to as the base case.

Without a base case, the function would continue calling itself indefinitely — or more realistically, until the program exhausts the call stack and crashes.

When designing a recursive algorithm, there are two key components to consider:

- Problem reduction: How can the original problem be transformed into a simpler instance of the same problem?

- Base case: At what point can recursion stop and begin returning results back up the call stack?

Example – Summing a List of Numbers

Suppose we are given a list of numbers and want to compute their sum.

Note

This is a contrived example, since Q already provides the built-in function sum. The purpose here is purely illustrative.

We can define the following recursive function:

// Sum a list of numbers

sumList:{[list]

$[0<count list;

(first list)+sumList 1_list;

0

]

}

And use it as follows:

q)sumList 1 2 3 4 5

15

The function first checks whether the list contains any elements using count. This serves as the base case. If the list is empty (count of the list is zero), the function returns 0.

Otherwise, the function:

- Takes the first element (first list)

- Recursively calls sumList on the remainder of the list (1_list drops the first element)

- Adds the first element to the result of the recursive call

Visualisation

To understand what happens internally, consider:

sumList 1 2 3 4 5

-> 1 + sumList 2 3 4 5

-> 2 + sumList 3 4 5

-> 3 + sumList 4 5

-> 4 + sumList 5

-> 5 + sumList ()

-> 0

<- 0

<- 5 + 0 = 5

<- 4 + 5 = 9

<- 3 + 9 = 12

<- 2 + 12 = 14

<- 1 + 14 = 15

At the top level, we begin with 1 + sumList 2 3 4 5. However, the addition cannot be performed until sumList 2 3 4 5 has been evaluated.

What happens behind the scenes is that each pending addition (1 +, 2 +, 3 +, …) is stored on the call stack while deeper recursive calls are evaluated. Once the base case is reached and 0 is returned, the function begins to unwind, resolving each stored operation in reverse order.

Each recursive call therefore consumes stack space to store:

- The function arguments

- The return address

- Any intermediate state required to complete the computation

This is the primary disadvantage of naive recursion: stack usage grows linearly with input size. For sufficiently large inputs, this can lead to stack overflow, whereas an iterative approach may avoid this cost.

Improvements to sumList

There are two small improvements we can make to sumList.

1. Improve the Base Case

In the original version, the base case occurs when the list is empty. However, we can eliminate one recursive step by using a slightly stronger base case: if the list contains a single element, we simply return that element.

sumList:{[list]

$[1<count list;

(first list)+sumList 1_list;

first list

]

}

This avoids the final recursive call on an empty list.
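One consequence worth checking is how the two base cases behave on an empty list. The sketch below uses the hypothetical names sumListEmptyBase and sumListSingleBase so that both versions can coexist in one session.

```q
// Base case: an empty list returns 0
sumListEmptyBase:{[list] $[0<count list; (first list)+sumListEmptyBase 1_list; 0]}

// Base case: a single-element list returns that element
sumListSingleBase:{[list] $[1<count list; (first list)+sumListSingleBase 1_list; first list]}

sumListEmptyBase 1 2 3 4 5      // 15
sumListSingleBase 1 2 3 4 5     // 15

// On an empty long list the results differ
sumListEmptyBase `long$()       // 0
sumListSingleBase `long$()      // 0N, since first of an empty long list is null
```

If empty inputs are possible, the original base case is the safer of the two.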

2. Use .z.s for Self-Reference

Q provides the built-in .z.s, which refers to the currently executing function. Using .z.s, we can avoid hard-coding the function name inside its own definition:

sumList:{[list]

$[1<count list;

(first list)+.z.s 1_list;

first list

]

}

Here, .z.s replaces the explicit call to sumList. This has a practical advantage: if the function is later renamed or assigned to a different symbol, the recursive call does not need to be updated. The function becomes self-contained and name-independent. Using .z.s is especially helpful if the function is passed around anonymously or stored in different variables.
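As a small illustration of that last point, a recursive lambda can be applied without ever being named, and a stored copy keeps working after the original name is reassigned. The names sumSelf and total are hypothetical.

```q
// Anonymous recursive lambda applied directly to a list
{[list] $[1<count list; (first list)+.z.s 1_list; first list]} 1 2 3 4 5    // 15

// Store the function under a second name, then reassign the first
sumSelf:{[list] $[1<count list; (first list)+.z.s 1_list; first list]}
total:sumSelf
sumSelf:{[list] 0}

// total still recurses into itself, not into the new sumSelf
total 1 2 3 4 5     // 15
```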

Why Use Recursive Algorithms?

The main advantage of recursion is clarity. Recursive solutions often mirror the structure of the problem itself, which can make them easier to design, reason about, and verify.

If input sizes are small, or performance constraints are not critical, recursion can be an elegant and expressive choice. However, when working with large datasets, especially in a performance-sensitive environment like Q/KDB+, the cost of stack growth becomes an important consideration.

What is an Iterative Function

An iterative function is one that repeatedly applies a rule to successive results until some terminating condition is reached.

With each iteration, the intermediate result moves closer to the final answer. Once the input is exhausted (or the stopping condition is met), the computation terminates and the result is returned.

In some programming languages, particularly Lisp dialects, iteration can look like recursion. A function may call itself, but if that call appears in tail position (i.e. its result is returned directly), the compiler/interpreter can apply tail call optimisation (TCO).

With TCO, the runtime does not allocate a new stack frame for the recursive call. Instead, it reuses the current frame and updates the state. Although the code is written recursively, it executes as an iterative process.

Q/KDB+, however, takes a different approach. Iteration is explicit and built into the language via higher-order functions such as:

- over (/)

- scan (\)

These constructs express iteration directly, without relying on recursion.

Example

Returning to the earlier example of summing a list of numbers, we can define an iterative version:

sumList:({x+y}/)

And use it as before:

q)sumList 1 2 3 4 5

15

Here:

- {x+y} is a small binary lambda.

- Applying / transforms it into an iterator using over.

- The surrounding parentheses ensure the function is treated as unary, since / is overloaded and also has a binary form.

In this case, / acts as a left fold (accumulate). Iteration stops once all elements in the list have been consumed.
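The binary form takes an explicit starting value for the accumulator. A short sketch of both forms, using arbitrary numbers:

```q
// Unary form: the first element seeds the accumulator
({x+y}/) 1 2 3 4 5          // 15

// Binary form: the left argument seeds the accumulator
10 {x+y}/ 1 2 3 4 5         // 25

// The same call in bracket notation
{x+y}/[10;1 2 3 4 5]        // 25
```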

Visualisation

To understand what happens conceptually:

sumList 1 2 3 4 5

-> {x + y}/ 1 2 3 4 5

-> {1 + y}/ 2 3 4 5

-> {1 + 2}/ 3 4 5

-> {3 + 3}/ 4 5

-> {6 + 4}/ 5

-> {10 + 5}/ ()

-> 15

Within the lambda:

- x represents the accumulated value so far.

- y represents the next element of the list.

On the first step, there is no prior accumulated value, so x is set to the first element (1). Each subsequent iteration combines the accumulated result with the next value and updates x accordingly.

Unlike recursion, there is no growing chain of deferred operations waiting on the call stack. Only the current accumulated value needs to be retained.

Improvements to sumList

The explicit lambda {x+y} helps illustrate what is happening, but we can simplify further:

sumList:(+/)

This is exactly how sum was historically defined.

Note

From version 4.0, sum is implemented internally to allow implicit parallelisation.

The related operator scan (\) performs the same iteration but returns all intermediate accumulated results:

q)(+\) 1 2 3 4 5

1 3 6 10 15

// sums is equivalent

q)sums

(+\)

q)sums 1 2 3 4 5

1 3 6 10 15

Where / returns only the final result, \ returns the entire sequence of intermediate states.

Why Use Iterative Algorithms?

An iterative approach stores only the current state (for example, the accumulated value in sumList). It does not require one stack frame per element, and therefore has constant stack usage.

In Q/KDB+, iteration via / and \ is typically:

- More memory-efficient

- Faster

- More idiomatic

Recursive solutions can sometimes feel more direct when modelling a problem structurally. However, for large inputs or performance-sensitive workloads, which are common in KDB+ environments, explicit iteration is generally preferred.

Comparing Performance of Recursive & Iterative Algorithms

sumList

Let us compare the recursive and iterative implementations of sumList:

// Create list

q)list:til 1000

// Results equal

q)sumListRecursive list

499500

q)sumListIterative list

499500

// Time and space

q)\ts:1000 sumListRecursive list

234 5468128

q)\ts:1000 sumListIterative list

0 560

Both implementations produce the same result. However, the performance characteristics differ dramatically.

The recursive version:

- Takes significantly longer to execute

- Allocates substantially more memory

By contrast, the iterative version using / is both faster and far more memory-efficient.

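The difference in memory usage reflects the state that each pending recursive call keeps alive. Every level of recursion holds its own copy of the remaining list (created by 1_list) in addition to its stack frame, and none of it can be released until the calls unwind. The iterative version maintains only the current accumulated state.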

If we increase the size of the input, the problem becomes even clearer:

q)list:til 10000

q)sumListRecursive list

'stack

For sufficiently large inputs, the recursive implementation exhausts the stack and fails. The iterative version does not suffer from this limitation.
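For comparison, the iterative form handles the same input without difficulty. A quick check, assuming sumListIterative is defined as (+/) as in the earlier section:

```q
sumListIterative:(+/)

list:til 10000
sumListIterative list               // 49995000

// Agrees with the built-in
sum[list]~sumListIterative list     // 1b
```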

Confirming That Q Does Not Apply Tail Call Optimisation

We can further confirm that Q does not perform tail call optimisation (TCO) by testing a tail-recursive version of sumList.

sumListRecursive:{sumListAcc[x;0]}

sumListAcc:{[list;acc]

$[0<count list;

.z.s[1_list; acc+first list];

acc

]

}

Although this function is structurally tail-recursive (the recursive call is in tail position) it still overflows the stack for large inputs:

q)list:til 10000

q)sumListRecursive list

'stack

If Q implemented TCO, this version would execute in constant stack space. The fact that it still fails demonstrates that Q does not eliminate stack frames for tail calls.
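The accumulator pattern itself carries over to explicit iteration. The following is a minimal sketch (the name sumListWhile is hypothetical) that threads the same (list; acc) state through the while form of /, so no call stack is involved. It still copies the remaining list on every step, so it illustrates the execution model rather than a fast way to sum.

```q
// State is (remaining list; accumulator)
// Step: drop the head of the list and add it to the accumulator
// Predicate: continue while the remaining list is non-empty
sumListWhile:{[list]
  last {(1_x 0; (x 1)+first x 0)}/[{0<count x 0}; (list;0)]
  }

sumListWhile 1 2 3 4 5      // 15
sumListWhile til 10000      // 49995000
```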

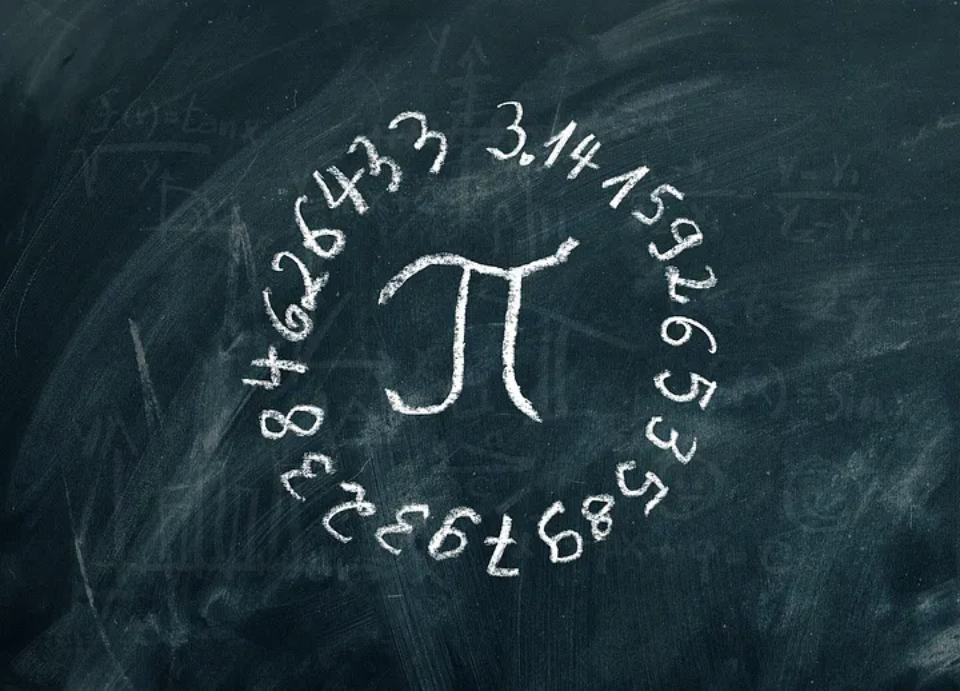

Factorial

The factorial of a number n, denoted \(n!\), is defined as the product of all positive integers less than or equal to n:

\[ n! = n \times (n - 1) \times (n - 2) \times \cdots \times 1 \]

By definition, \(0! = 1\).

This mathematical definition translates directly into a recursive algorithm:

factorialRecursive:{[n] $[n>1; n*.z.s n-1; 1]};

If n is greater than 1, we multiply n by the factorial of n-1. Otherwise, we return 1.

The iterative version computes the product of all integers from 1 to n:

factorialIterative:{[n] (*/) 1+til n};

Here:

- til n produces 0 1 2 ... n-1

- 1+til n shifts this to 1 2 ... n

- */ performs an over using multiplication
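Swapping / for \ shows the running product, which makes it easy to see how the result is built up. The input 5 is arbitrary:

```q
1+til 5             // 1 2 3 4 5
(*/) 1+til 5        // 120
(*\) 1+til 5        // 1 2 6 24 120

// The product of an empty list is 1, so n=0 needs no special handling
(*/) 1+til 0        // 1
```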

Comparison

// Results equal

q)factorialRecursive 20

2432902008176640000

q)factorialIterative 20

2432902008176640000

// Time and space

q)\ts:100000 factorialRecursive 20

234 1728

q)\ts:100000 factorialIterative 20

43 672

Both implementations produce the same result. However, as with sumList, the iterative version is:

- Faster

- More memory-efficient

Even for a relatively small input such as 20, the recursive version allocates more memory due to stack growth.

Fibonacci sequence

The Fibonacci sequence is defined by the recurrence:

\[ F_n = F_{n - 1} + F_{n - 2}, \quad n > 1 \]

with base cases:

\[ F_0 = 0, \quad F_1 = 1 \]

Because the definition itself is recursive, it translates directly into a recursive function:

fibRecursive:{[n] $[n>1; .z.s[n-1]+.z.s n-2; n]};

If n > 1, we sum the two preceding Fibonacci numbers. Otherwise, we return n, which correctly handles the base cases 0 and 1.

Iterative Form

The iterative version is less obvious.

Since each term depends only on the previous two, we can maintain a state consisting of the last two values. Starting with:

q)x:0 1

The next term is simply:

q)sum x

1

To move forward, we update the state by:

- Dropping the first element

- Appending the new sum

Conceptually:

// 1st iteration

q)show x:(x 1;sum x)

1 1

// 2nd iteration

q)show x:(x 1;sum x)

1 2

// 3rd iteration

q)show x:(x 1;sum x)

2 3

// 4th iteration

q)show x:(x 1;sum x)

3 5

..

At each step, x always holds the two most recent Fibonacci numbers.

Using the do Form of /

If we want the 10th Fibonacci number, we must iterate \(n − 1\) times, since the initial state already contains the first two terms (0 1).

The do form of / takes:

- A fixed number of iterations

- An initial state

We can compute:

q){(x 1;sum x)}/[9;0 1]

34 55

This returns the 9th and 10th Fibonacci numbers. To obtain the 10th term:

q)last {(x 1;sum x)}/[9;0 1]

55
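Replacing / with \ in the same expression keeps every intermediate state, which is a convenient way to inspect the process. This is only a sketch for visualisation; the variable name states is introduced here.

```q
// Each item is one state: (previous term; current term)
states:{(x 1;sum x)}\[9;0 1]

count states            // 10: the initial state plus 9 iterations
first each states       // 0 1 1 2 3 5 8 13 21 34
last states             // 34 55
```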

Handling the Edge Case

If \(n = 0\), we should return 0. However:

q)last {(x 1;sum x)}/[-1;0 1]

1

When the iteration count is zero or negative, / simply returns the initial state (0 1). Taking last therefore returns 1, which is incorrect for \(F_0\).

We handle this explicitly:

fibIterative:{[n] $[n<1; n; last {(x 1;sum x)}/[n-1;0 1]]};

Comparison

// Results equal

q)fibRecursive 25

75025

q)fibIterative 25

75025

// Time and space

q)\ts:100 fibRecursive 25

2424 2512

q)\ts:100 fibIterative 25

0 560

The iterative version is dramatically more efficient.

This difference is not just due to stack usage.

The naive recursive Fibonacci implementation performs massive recomputation. For example:

fib[5]

-> fib[3] + fib[4]

-> (fib[1] + fib[2]) + (fib[2] + fib[3])

-> ..

The same values are recalculated repeatedly:

- fib[3] appears multiple times

- fib[2] appears multiple times

- and so on

This leads to exponential time complexity.

By contrast, the iterative version computes each Fibonacci number exactly once, resulting in linear time complexity.
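The amount of repeated work can be measured directly. The sketch below adds a global counter (calls, a name introduced here for illustration) to the recursive definition and compares it with the number of steps the iterative form needs for the same input.

```q
// Count how many times the recursive function is entered
calls:0
fibCounted:{[n] calls+::1; $[n>1; .z.s[n-1]+.z.s n-2; n]}

fibCounted 20                   // 6765
calls                           // 21891 function calls

// The iterative form applies its step function only 19 times
last {(x 1;sum x)}/[19;0 1]     // 6765
```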

Exponentiation

Exponentiation is repeated multiplication of a base b by itself n times:

\[ b^n = b \times b \times \cdots \times b \quad \text{(n times)} \]

with the base case:

\[ b^0 = 1 \]

A naive implementation would require \(O(n)\) multiplications. However, we can use exponentiation by squaring, which reduces the complexity to \(O(\log n)\):

\[ \begin{align*} b^n &= b^\frac{n}{2} \times b^\frac{n}{2} \quad \text{if n is even} \\ b^n &= b \times b^{n - 1} \quad \text{if n is odd} \end{align*} \]

Recursive Implementation

This definition translates naturally into a recursive function:

expRecursive:{[b;n]

$[

n=0; 1;

0=n mod 2; {x*x} .z.s[b;n div 2];

b*.z.s[b;n-1]

]

};

- If \(n = 0\), return 1

- If n is even, compute \(b^\frac{n}{2}\) and square it

- If n is odd, compute \(b \times b^{n - 1}\)

Because each recursive step roughly halves n, the recursion depth is \(O(\log n)\).

Iterative Implementation (while Form of /)

We can implement the same logic iteratively using the while form of /.

expIterative:{[b;n]

last {

b:x 0; n:x 1; a:x 2;

$[0=n mod 2;

(b*b; n div 2; a);

(b; n-1; b*a)

]

}/[{0<x 1}; (b;n;1)]

};

Here:

- The state is a list (b; n; a) where:

  - b is the current base

  - n is the remaining exponent

  - a is the accumulated result

- The predicate {0<x 1} checks whether \(n > 0\)

- On each iteration:

  - If n is even, we square b and halve n

  - If n is odd, we multiply the accumulator by b and decrement n

This mirrors the recursive structure closely, but expresses it as an explicit state transition.
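To see the state transition in action, the step function can be pulled out and traced with the while form of \. The names expStep and trace are hypothetical, and 2 raised to the power 10 is just a sample input.

```q
// One step of exponentiation by squaring on the state (b; n; a)
expStep:{b:x 0; n:x 1; a:x 2; $[0=n mod 2; (b*b; n div 2; a); (b; n-1; b*a)]}

// Scan keeps every state until the remaining exponent reaches 0
trace:expStep\[{0<x 1}; (2;10;1)]

// States in order, each as (b; n; a):
//   2 10 1
//   4 5 1
//   4 4 4
//   16 2 4
//   256 1 4
//   256 0 1024
count trace         // 6
last last trace     // 1024
```

Five steps are enough for an exponent of 10, compared with ten multiplications for the naive approach.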

Comparison

// Results equal

q)expRecursive[2;10]

1024

q)expIterative[2;10]

1024

// Time and space

q)\ts:100000 expRecursive[2;10]

286 928

q)\ts:100000 expIterative[2;10]

402 928

Interestingly:

- Both implementations use the same amount of memory.

- The recursive version is slightly faster in this case.

This differs from earlier examples. Why?

Because:

- Recursion depth is only \(O(\log n)\)

- There is no exponential recomputation

- The recursive structure is compact and direct

Here, recursion is not inherently inefficient.

A More Idiomatic Iterative Version

The previous iterative implementation was intentionally written to mirror the recursive algorithm as closely as possible.

However, in Q we can write a much simpler version:

expIterativeBetter:{[b;n] (*/) n#b};

This constructs a list of n copies of b and multiplies them using */.

While this version:

- Allocates a list of length n

- Has \(O(n)\) time complexity

it is still highly optimised in Q and performs very well:

// Time and space

q)\ts:100000 expIterativeBetter[2;10]

26 960

It uses slightly more memory due to n#b, but benefits from highly optimised vector operations.
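Two related points are worth a quick check: the product of an empty list is 1, so an exponent of 0 needs no special case, and Q also provides the built-in keyword xexp, which returns a float rather than a long.

```q
expIterativeBetter:{[b;n] (*/) n#b}

expIterativeBetter[2;10]    // 1024
expIterativeBetter[2;0]     // 1, the product of an empty list

// Built-in alternative; note the float result
2 xexp 10                   // 1024f
```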

Conclusion

Recursion and iteration are mathematically equivalent, but in Q/KDB+ they are not equivalent in execution.

From the examples we saw:

- Naive recursion grows the stack linearly (sumList).

- Tail recursion does not help, because Q does not implement tail call optimisation.

- Recursive Fibonacci is inefficient both due to stack growth and repeated recomputation.

- Recursive exponentiation performs well because its depth is logarithmic.

- Vectorised primitives are typically the most efficient approach.

The key lesson is not simply that iteration is faster than recursion. Performance depends on:

- Algorithmic complexity

- Recursion depth

- How well the solution maps to Q’s built-in vector operations

In practice, idiomatic Q favours explicit iteration (/, \) and vector primitives. Recursion can be elegant and expressive, but it must be used with an understanding of its stack behaviour and performance implications.

Writing efficient Q is ultimately about choosing the execution model that fits the problem — not just the mathematical definition.

Database Maintenance in KDB+

KDB+ requires ongoing maintenance as datasets evolve and schemas change. KX provides dbmaint.q — a widely-used utility for partitioned databases. This blog walks through the original functions and re-implements them with improved efficiency, readability, and use of more modern language features.

dbm KDB-X Module

We’ll make this script an importable module using the KDB-X module system. To use the script as a module:

- Copy or download the dbm.q script and place it within your module search path (e.g. /home/user/.kx/mod/qlib/dbm.q).

- Define the module namespace in your KDB session:

dbm:use`qlib.dbm // Assuming dbm.q is within .../.kx/mod/qlib/

You can find out more information about KDB-X modules in my other blog.

Notable Improvements

The modernised dbm.q provides:

- Clearer function and variable names.

- Support for splayed, partitioned, and segmented databases.

- Nested column type support.

- Parallelisation for large datasets.

Creating a Test Database

To demonstrate the functionality, we’ll first set up a small test environment. This will include both a splayed database and a partitioned database, each containing a simple trade table.

// Define a sample trade table

trade:([]

time:5#.z.P;

sym:`IBM`AMZN`GOOGL`META`SPOT;

size:1 2 3 4 5;

price:10 20 30 40 50f;

company:(

"International Business Machines Corporation";

"Amazon.com, Inc.";

"Alphabet Inc.";

"Meta Platforms, Inc.";

"Spotify Technology S.A."

);

moves:3 cut -5+15?10

);

// Create a splayed DB

`:splayDB/trade/ set .Q.en[`:splayDB;trade];

// Create a partitioned database (two partitions)

{[db;dt;tname]

.Q.dd[db;dt,tname,`] set .Q.en[db;get tname]

}[`:partDB;;`trade] each 2026.02.03 2026.02.04;

Listing Column Names

Let’s start with something simple: returning the list of column names from a table.

In a splayed table, the column names are stored in the .d file inside the table’s directory (tdir). Reading this file gives us the column list directly.

get tdir,`.d

For example:

q)get `:splayDB/trade,`.d

`time`sym`size`price`company`moves

To make this reusable, we can wrap the logic in a helper function, getColNames. This function checks whether the .d file exists and, if so, reads it. Otherwise, it returns an empty symbol list.

getColNames:{[tdir] $[count key .Q.dd[tdir;`.d]; get tdir,`.d; `$()]};

q)getColNames `:splayDB/trade

`time`sym`size`price`company`moves

Wrapping for General Use

Public functions are intended to work across different database layouts (splayed, partitioned, segmented). To achieve this, we usually wrap helper functions so they can be applied to every partition where needed.

For listing column names, however, it’s enough to read from just one partition (assuming schema consistency across partitions). Here’s the public version:

// List all column names of the given table

listCols:{[db;tname] getColNames last allTablePaths[db;tname]};

where

- db - Path to database root.

- tname - Table name.

allTablePaths[db;tname] retrieves all paths to the table within the database. We’ll define this utility in the next section.

Examples

q)listCols[`:splayDB;`trade]

`time`sym`size`price`company`moves

q)listCols[`:partDB;`trade]

`time`sym`size`price`company`moves

Why not just use cols?

The built-in cols function works perfectly well when a table is already mapped into memory. However, listCols avoids having to map a database into memory unnecessarily.

Listing Table Paths

When dealing with different database layouts, the path to a table depends on the type of database:

- Splayed: each table has a single directory in the database root.

- Partitioned (or segmented): the same table name usually appears once per partition.

Our “base” functions, such as getColNames, operate on a single splayed table path. To support partitioned and segmented databases, we first need a way to collect all table paths within a given database. This is the role of allTablePaths.

Inspecting the Database Root

We can start by listing the contents of a database root (db) using key:

// Files/directories in a splayed database

q)key `:splayDB

`s#`sym`trade

// Files/directories in a partitioned database

q)key `:partDB

`s#`2026.02.03`2026.02.04`sym

If key returns an empty list, the database does not exist and we can return early:

if[0=count files:key db; :`$()];

Identifying Partitions

Partition directories always start with a digit, since partition values must be of an integral type. We can detect these with a simple regex:

where files like "[0-9]*"

For a splayed database this yields nothing:

q)where key[`:splayDB] like "[0-9]*"

`long$()

For a partitioned database we get the indices of partition directories:

q)where key[`:partDB] like "[0-9]*"

0 1

Filtering files down to only partitions looks like:

files:key db;

files@:where files like "[0-9]*";
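The filter can be tried without touching the filesystem. Here the directory listing is simulated with a hand-written symbol list (the extra trade entry is added purely for illustration):

```q
// Simulated result of key on a database root
files:`$("2026.02.03";"2026.02.04";"sym";"trade")

files like "[0-9]*"                 // 1100b
where files like "[0-9]*"           // 0 1
files where files like "[0-9]*"     // `2026.02.03`2026.02.04
```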

Handling Splayed vs Partitioned

If no partitions are found, we must have a splayed database. In that case, just return the single table path (wrapped in enlist to ensure the result is always a list):

enlist .Q.dd[db;tname]

If partitions exist, construct paths for each partition:

(.Q.dd[db;] ,[;tname]@) each files
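The composition is terse, so here is a more explicit sketch of the same path construction using a lambda. The partition names are taken from the test database, and no files need to exist for the paths to be built.

```q
db:`:partDB
tname:`trade
files:`$("2026.02.03";"2026.02.04")

// Join database root, partition and table name into one path per partition
paths:{[db;tname;part] .Q.dd[db;part,tname]}[db;tname;] each files
paths       // `:partDB/2026.02.03/trade`:partDB/2026.02.04/trade
```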

Handling Segmented Databases

Segmented databases introduce one additional wrinkle: the root contains a file par.txt listing the paths of all underlying partitioned databases. We can handle this by reading the file and recursively calling our function for each listed path:

if[any files like "par.txt"; :raze .z.s[;tname] each hsym `$read0 .Q.dd[db;`par.txt]];

Final Cleanup

Up to this point, we’ve blindly appended the table name to each partition path. To avoid returning non-existent directories, we filter to keep only paths that actually exist:

paths where 0<(count key@) each paths

// Get all paths to a table within a database

allTablePaths:{[db;tname]

if[0=count files:key db; :`$()];

if[any files like "par.txt"; :raze .z.s[;tname] each hsym `$read0 .Q.dd[db;`par.txt]];

files@:where files like "[0-9]*";

paths:$[count files; (.Q.dd[db;] ,[;tname]@) each files; enlist .Q.dd[db;tname]];

paths where 0<(count key@) each paths

};

q)allTablePaths[`:splayDB;`trade]

,`:splayDB/trade

q)allTablePaths[`:partDB;`trade]

`:partDB/2026.02.03/trade`:partDB/2026.02.04/trade

q)allTablePaths[`:nonExistingDB;`trade]

`symbol$()

q)allTablePaths[`:splayDB;`nonExistingTable]

`symbol$()

q)allTablePaths[`:partDB;`nonExistingTable]

`symbol$()

Adding a New Column

To add a column to a table, create a helper add1Col that adds it to a single splayed directory:

// Add a column to a single splayed table

add1Col:{[tdir;cname;default]

if[not cname in colNames:getColNames tdir;

len:count get tdir,first colNames;

.[.Q.dd[tdir;cname];();:;len#default];

@[tdir;`.d;,;cname]

]

};

Line-by-line breakdown:

- Check that the new column name does not already exist within the table.

- Get the count/length of the table.

- Create the new column file, filling it with the correct number of default values to match the table count.

- Add the new column name to the .d file.

The addCol Wrapper

Our wrapper function will do the following:

1. Validate the Column Name

A name is valid if it:

- adheres to Q name formatting (no spaces, special chars, etc.); and

- is not a reserved word.

isValidName:{[name] (name=.Q.id name) and not name in .Q.res,key`.q};

validateName:{[name] if[not isValidName name; '"Invalid name: ",string name]};

We use .Q.id to sanitise the name and, if it changed, then the given name did not adhere to Q name formatting. If a name is invalid, we reject it and signal an error.
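A few sample calls show which names pass the check. The candidate names below are arbitrary examples:

```q
isValidName:{[name] (name=.Q.id name) and not name in .Q.res,key`.q}

isValidName `side           // 1b
isValidName `$"my col"      // 0b, .Q.id strips the space so the name changes
isValidName `select         // 0b, reserved word
isValidName `sums           // 0b, already defined in the .q namespace
```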

2. Handle Symbol Enumeration

If the new column’s default values are of type symbol, they must be enumerated against the database’s symbol domain before being written to disk.

This is handled by enum:

default:enum[db;domain;default]

where

enum:{[db;domain;vals] $[11h=abs type vals; .Q.dd[db;domain]?vals; vals]};

3. Add the Column Across All Partitions

Finally, we apply add1Col to each table path.

If the database is partitioned, this will add the column to every partition directory — in parallel — using peach:

add1Col[;cname;default] peach allTablePaths[db;tname]

Bringing it all together, we have:

addCol:{[db;domain;tname;cname;default]

validateName cname;

default:enum[db;domain;default];

add1Col[;cname;default] peach allTablePaths[db;tname];

};

q)addCol[`:splayDB;`sym;`trade;`side;`b]

q)addCol[`:partDB;`sym;`trade;`side;`b]

The Symbol File

In KDB+, the symbol type is an interned string — meaning that only one copy of each distinct string value is stored in memory, and all references point to that single instance.

On disk, this concept is mirrored through enumeration. Any symbol columns in splayed or partitioned tables must be enumerated against the symbol file (often referred to as sym). This file stores a global list of unique symbols used across the database.

When enumerating, KDB+ converts symbol values into integer indices corresponding to their positions in the symbol file. This ensures consistency and compactness across tables.

If a symbol column is not enumerated before saving, KDB+ will raise an error — hence why enumeration is an essential part of the column addition process.
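Enumeration can be observed in memory as well. This sketch uses a hypothetical in-memory domain called symDomain rather than an on-disk sym file:

```q
// An in-memory domain with two known symbols
symDomain:`IBM`AMZN

// Enumerate values against the domain; unseen symbols are appended to it
e:`symDomain?`AMZN`MSFT`IBM

symDomain               // `IBM`AMZN`MSFT
type e                  // 20h, an enumerated list
value e                 // `AMZN`MSFT`IBM
symDomain?value e       // 1 2 0, the positions stored in place of the symbols
```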

Deleting a Column

To delete a column, we only need to remove the column file and update the table metadata accordingly.

The process involves three straightforward steps:

- Confirm that the column exists: cname in colNames:getColNames tdir

- Delete the column file: hdel .Q.dd[tdir;cname]

- Update the .d file: @[tdir;`.d;:;colNames except cname]

Nested Columns

The original dbmaint.q script did not handle nested column types, which require a bit of extra care.

In KDB+, nested columns can be splayed as long as they contain only simple lists (e.g. strings, longs). When a nested column is splayed, it’s actually stored as two files:

- one named after the column itself, and

- another with the same name suffixed by the # character.

For example, our trade table contains two nested columns — company (a list of strings) and moves (a list of longs):

q)key `:splayDB/trade

`s#`.d`company`company#`moves`moves#`price`size`sym`time

As shown, each nested column (company, moves) has two associated files: the main column file and the hash-suffixed file (company#, moves#).

When adding nested columns, we did not need to explicitly handle this case — KDB+ automatically creates both files when saving a nested column to disk.

However, when deleting a column, we must ensure that the accompanying “hash column” (colname#) is also removed.

We can achieve this by checking if the hash file exists and deleting it:

if[(hname:`$string[cname],"#") in key tdir; hdel .Q.dd[tdir;hname]]

Putting It All Together

We can now define a helper function to delete a column — including nested columns — from a single splayed table:

// Delete a column from a single splayed table

del1Col:{[tdir;cname]

if[cname in colNames:getColNames tdir;

hdel .Q.dd[tdir;cname];

if[(hname:`$string[cname],"#") in key tdir; hdel .Q.dd[tdir;hname]];

@[tdir;`.d;:;colNames except cname]

]

};

If the database is partitioned, we need to repeat this operation for every partition.

To handle that, we define a simple wrapper function delCol that applies del1Col across all partition paths:

delCol:{[db;tname;cname] del1Col[;cname] peach allTablePaths[db;tname];};

q)delCol[`:splayDB;`trade;`side]

q)delCol[`:partDB;`trade;`side]

// Delete nested

q)delCol[`:splayDB;`trade;`moves]

q)delCol[`:partDB;`trade;`moves]

Copying a Column

Copying a column involves three steps:

1. Verify that the column can be copied

- The source column must exist.

- The destination column must not already exist.

(srcCol in colNames) and not dstCol in colNames:getColNames tdir

2. Copy the underlying column files

- For simple columns, this is a single file.

- For nested columns, the corresponding hash file must also be copied.

- The column copy itself is performed at the filesystem level:

  - Linux/macOS/Solaris: cp

  - Windows: copy /v /z

A helper flag identifies the operating system:

isWindows:.z.o in `w32`w64;

Next, we define a platform-aware path formatter:

convertPath:{[path]

path:string path;

if[isWindows; path[where"/"=path]:"\\"];

(":"=first path)_ path

};
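As a quick illustration, the sketch below repeats the two definitions so it runs on its own and applies convertPath to a file handle. The path is a made-up example, and the result noted in the comment assumes a non-Windows platform.

```q
// Definitions repeated from the text so the snippet is self-contained
isWindows:.z.o in `w32`w64;
convertPath:{[path]
  path:string path;
  if[isWindows; path[where"/"=path]:"\\"];
  (":"=first path)_ path
  };

// The leading colon of the file handle is dropped so the OS command accepts the path.
// On Linux/macOS this gives "splayDB/trade/size"; on Windows the slashes become backslashes.
convertPath `:splayDB/trade/size
```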

And a wrapper to invoke the appropriate command:

copy:{[src;dst] system $[isWindows; "copy /v /z "; "cp "]," " sv convertPath each src,dst;};

For nested columns, also copy the hash file:

if[(hname:`$string[srcCol],"#") in key tdir;

copy . .Q.dd[tdir;] each hname,`$string[dstCol],"#"

];

3. Update the table’s metadata (.d file)

@[tdir;`.d;,;dstCol]

The full copy1Col function:

// Copy srcCol → dstCol within a single on-disk table directory

copy1Col:{[tdir;srcCol;dstCol]

if[(srcCol in colNames) and not dstCol in colNames:getColNames tdir;

copy . .Q.dd[tdir;] each srcCol,dstCol;

if[(hname:`$string[srcCol],"#") in key tdir;

copy . .Q.dd[tdir;] each hname,`$string[dstCol],"#"

];

@[tdir;`.d;,;dstCol]

]

};

Apply to All Partitions

The wrapper performs name validation and applies the operation across all table partitions:

copyCol:{[db;tname;srcCol;dstCol]

validateName dstCol;

copy1Col[;srcCol;dstCol] peach allTablePaths[db;tname];

};

q)copyCol[`:splayDB;`trade;`size;`sizeCopy]

q)copyCol[`:partDB;`trade;`size;`sizeCopy]

// Copy nested

q)copyCol[`:splayDB;`trade;`company;`companyCopy]

q)copyCol[`:partDB;`trade;`company;`companyCopy]

Checking if a Column Exists

Determining whether a column exists is straightforward: we simply check whether the column name appears in the table’s .d file, which we access via getColNames.

// Does the given column exist in a single partition directory?

has1Col:{[tdir;cname] cname in getColNames tdir};

For a partitioned table, the presence of a column should be consistent across all partitions.

We therefore apply has1Col to every partition directory and confirm that the result is true for all of them.

// Does the given column exist in all partitions of the table?

hasCol:{[db;tname;cname]

$[count paths:allTablePaths[db;tname]; all has1Col[;cname] peach paths; 0b]

};

Note that we first check whether any paths were returned. If not, we simply return 0b, as the table does not exist within the database.

q)hasCol[`:splayDB;`trade;`size]

1b

q)hasCol[`:splayDB;`trade;`nonExistingCol]

0b

q)hasCol[`:splayDB;`nonExistingTab;`size]

0b

Renaming Columns

Renaming a column follows a similar pattern to copying a column:

1. Validating Column Names

We begin by checking that the column we want to rename (old) exists and that the proposed name (new) does not:

(old in colNames) and not new in colNames:getColNames tdir

2. Renaming the Column File

The file-level rename operation uses the OS’s native move command (mv on Unix-like systems, move on Windows).

We wrap this in a helper that handles platform-specific behaviour and path formatting:

rename:{[src;dst] system $[isWindows; "move "; "mv "]," " sv convertPath each src,dst;}

Renaming the column’s data file is then simply:

rename . .Q.dd[tdir;] each old,new;

For nested columns:

if[(hname:`$string[old],"#") in key tdir;

rename . .Q.dd[tdir;] each hname,`$string[new],"#"

];

3. Updating .d

Finally, we update the .d metadata file.

Unlike copying, where we append, renaming requires modifying the existing list while preserving its order:

@[tdir;`.d;:;.[colNames;where colNames=old;:;new]]
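The in-memory part of this expression can be tried without touching disk. The sketch below uses a made-up column list in place of the real .d contents and contrasts the in-place amend with the remove-and-append approach, which would change the column order.

```q
// Hypothetical .d contents and names
colNames:`time`sym`size`price;
old:`size; new:`qty;

// Only the position holding old is overwritten; every other name keeps its place
renamed:.[colNames;where colNames=old;:;new];
renamed                      // `time`sym`qty`price

// Contrast: removing and appending would move the column to the end
(colNames except old),new    // `time`sym`price`qty
```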

The full rename1Col function:

// Rename a column in a single on-disk table directory.

rename1Col:{[tdir;old;new]

if[(old in colNames) and not new in colNames:getColNames tdir;

rename . .Q.dd[tdir;] each old,new;

if[(hname:`$string[old],"#") in key tdir;

rename . .Q.dd[tdir;] each hname,`$string[new],"#"

];

@[tdir;`.d;:;.[colNames;where colNames=old;:;new]]

]

};

Apply across all partitions:

// Rename a column across all partitions of a table.

renameCol:{[db;tname;old;new]

validateName new;

rename1Col[;old;new] peach allTablePaths[db;tname];

};

q)renameCol[`:splayDB;`trade;`sizeCopy;`sizeRenamed]

q)renameCol[`:splayDB;`trade;`companyCopy;`companyRenamed]

Reordering Columns

Reorder columns by updating the .d file (no data changes needed):

1. Validating User Input

Before applying a new order, we confirm that every name provided by the user corresponds to an existing column:

if[not all exists:order in colNames:getColNames tdir;

'"Unknown column(s): ","," sv string order where not exists

];

This raises an informative error listing only the invalid names.
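A small sketch of the check in isolation, assuming a made-up column list instead of reading .d from disk. The helper name check is hypothetical; it wraps the same if-statement so the signalled error can be trapped and inspected.

```q
// Hypothetical .d contents
colNames:`time`sym`size`price;

// Same validation as in the text, wrapped in a helper (name is illustrative)
check:{[colNames;order]
  if[not all exists:order in colNames;
    '"Unknown column(s): ","," sv string order where not exists
    ];
  `ok
  };

check[colNames;`sym`time]               // `ok
// Trap the error to see the message; only the invalid names are listed
@[check[colNames;];`sym`foo`bar;{x}]    // "Unknown column(s): foo,bar"
```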

2. Constructing the New Order

We reorder the .d file by placing the user-specified columns first, followed by any remaining columns in their original order:

@[tdir;`.d;:;order,colNames except order];

This mirrors the behaviour of xcols: the caller only needs to specify the priority columns, not the full list of column names.
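The comparison with xcols can be checked on a small in-memory table. The table below and its values are purely illustrative.

```q
// Small in-memory table with illustrative columns
t:([] time:2#.z.p; sym:`a`b; size:10 20; price:1.5 2.5; company:`x`y);
order:`time`sym`price;
colNames:cols t;

// Priority columns first, the rest in their original order
newOrder:order,colNames except order;
newOrder                       // `time`sym`price`size`company

// xcols produces the same column order for an in-memory table
newOrder~cols order xcols t    // 1b
```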

3. Putting It Into a Function

// Reorder the columns in a single database table

reorder1Cols:{[tdir;order]

if[not all exists:order in colNames:getColNames tdir;

'"Unknown column(s): ","," sv string order where not exists

];

@[tdir;`.d;:;order,colNames except order];

};

4. Applying the Reorder Across All Partitions

For partitioned tables, the column order must be updated consistently everywhere:

// Reorder the columns across all partitions of a table

reorderCols:{[db;tname;order] reorder1Cols[;order] peach allTablePaths[db;tname];};

q)getColNames .Q.dd[`:splayDB;`trade]

`time`sym`size`price`company`sizeRenamed`companyRenamed

q)reorderCols[`:splayDB;`trade;`time`sym`price`company]

q)getColNames .Q.dd[`:splayDB;`trade]

`time`sym`price`company`size`sizeRenamed`companyRenamed

Applying a Function To a Column

Another useful operation is applying a function to a column's data and persisting the result. For example, to scale the values in a column by 100, we apply a function that multiplies every value by 100 and then save the result back to the column file.

We start by checking that the column we want to update actually exists within the table:

cname in getColNames tdir

Next, we load the column values into memory:

oldVal:get tdir,cname;

We only want to perform the on-disk update if something actually changed. That could be the values in the column, but also the attribute on the column (for example, when the function we are applying sets or removes an attribute). We therefore store the current attribute for later comparison:

oldAttr:attr oldVal

Apply the function to the column values:

newVal:fn oldVal

Note that fn is a unary function that takes the column values as its argument and returns the new column values (the count of the list must be preserved).

Then, we get the attribute of the updated column:

newAttr:attr newVal

Next, check if anything actually changed:

$[oldAttr~newAttr;not oldVal~newVal;1b]

This conditional says: if the attributes differ, return 1b, since something changed and we want to write the update to disk. If the attributes match, the result depends on whether the column values changed.

If the conditional returns 1b, we proceed with the on-disk update:

.[.Q.dd[tdir;cname];();:;newVal]
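The change check can be exercised on in-memory lists before involving any files. In the sketch below, changed is a hypothetical helper that wraps the same conditional; the three calls cover the no-op case, a value change, and an attribute-only change.

```q
// Hypothetical helper wrapping the conditional from the text
changed:{[oldVal;newVal] $[attr[oldVal]~attr newVal; not oldVal~newVal; 1b]};

changed[1 2 3;1 2 3]        // 0b: same values, same attribute, nothing to write
changed[1 2 3;100*1 2 3]    // 1b: values differ
changed[1 2 3;`s#1 2 3]     // 1b: only the attribute differs
```

Comparing the attributes first means an attribute-only change is caught without relying on the value comparison at all.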

Putting it All Together

// Apply a function to a single database table

fn1Col:{[tdir;cname;fn]

if[cname in getColNames tdir;

oldAttr:attr oldVal:get tdir,cname;

newAttr:attr newVal:fn oldVal;

if[$[oldAttr~newAttr;not oldVal~newVal;1b];

.[.Q.dd[tdir;cname];();:;newVal]

]

]

};

and the wrapper function:

// Apply a function to a column across all partitions of a table

fnCol:{[db;tname;cname;fn] fn1Col[;cname;fn] peach allTablePaths[db;tname];};

q)get `:splayDB`trade`size

1 2 3 4 5

q)fnCol[`:splayDB;`trade;`size;100*]

q)get `:splayDB`trade`size

100 200 300 400 500

Using fnCol

We can make use of fnCol to derive a few more useful functions:

// Cast a column to a given type

castCol:{[db;tname;cname;typ] fnCol[db;tname;cname;typ$];};

// Set an attribute on a column

setAttr:{[db;tname;cname;attrb] fnCol[db;tname;cname;attrb#];};

// Remove an attribute from a column

rmAttr:{[db;tname;cname] setAttr[db;tname;cname;`];};

castCol casts a column to a new data type. It does this by passing typ$ as the fn argument to fnCol, where typ is any valid left argument to the $ operator when casting: a short (e.g. 7h), a char (e.g. "j"), or a symbol (e.g. `long).

setAttr is used to set an attribute on a table column. It does this by passing attrb# as the fn argument to fnCol, where attrb is the attribute to apply (`, `s, `u, `p, `g).

rmAttr removes an attribute from a table column; it simply passes ` to setAttr to achieve this.
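To see what these projections do before they are handed to fnCol, the sketch below applies them to small in-memory lists. The sample values are made up; no on-disk table is involved.

```q
// typ$ is a projection of $ with its left argument fixed
typ:`long;
fn:typ$;
fn 1 2 3i               // 1 2 3 as longs
type fn 1 2 3i          // 7h

// attrb# works the same way for attributes
attrb:`s;
attr (attrb#) 1 2 3     // `s

// Passing the empty symbol clears the attribute
attr (`#) `s#1 2 3      // `
```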

Adding Missing Columns

Over time, it is common for a database to accumulate schema drift: earlier partitions may be missing columns that were added later as the schema evolved.

To maintain consistency across the database, it is often necessary to retrofit older partitions so that all partitions share the same set of columns. A practical way to do this is to treat a “good” table — typically from a recent partition — as a schema template, and add any missing columns to older partitions using appropriate default values.

1. Identifying Missing Columns

Given:

- good: template table (with the complete schema)

- tdir: the directory of a table we want to fix

We determine which columns are missing by comparing their columns:

goodCols:cols good

missing:goodCols except getColNames tdir

This produces the list of columns that exist in the good table but not in the target table.

2. Generating Default Values

Each missing column must be added with a correctly typed default value.

We can generate an empty default of the correct type using 0# on a good column:

0#good col
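The two steps so far can be sketched in memory. Here good is a made-up template table, and tdirCols is a hypothetical stand-in for the result of getColNames tdir on an older partition.

```q
// Illustrative template table and the columns of an older partition
good:([] time:2#.z.p; sym:`a`b; size:10 20);
tdirCols:`time`sym;    // hypothetical result of getColNames tdir

// Columns present in the template but absent from the target
missing:cols[good] except tdirCols;

// One empty, correctly typed list per missing column
defaults:{[good;col] 0#good col}[good] each missing;
type each defaults     // 7h for the long column size
```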

3. Reorder Columns